Junction grammar

This article possibly contains original research. (November 2015) |

Junction grammar is a descriptive model of language developed during the 1960s by Eldon G. Lytle (1936–2010)[14].

Junction grammar is based on the premise that the meaning of language can be described and precisely codified by the way language elements are joined.

The model was used during the 1960s and 1970s in the attempt to create a functional computer-assisted translation system. It has also been used for linguistic analysis in the language instruction field.

Background

[edit]Early generative grammars dealt with language from a syntactic perspective, i.e. as the problem presented by the task of creating rules able to combine words into well-formed (i.e., grammatical) sentences. The rules used by these grammars were referred to as phrase-structure rules (P-rules). It was soon apparent, however, that a generative component composed solely of P-rules could not generate a wide variety of commonly occurring sentence types. In response to this dilemma, Harris proposed an explanation:

Some of the cruces in descriptive linguistics have been due to the search for a constituent analysis in sentence types where this does not exist because the sentences are transformationally derived from each other[1]

Chomsky's model of syntax - transformational grammar -picked up on this line of reasoning and added a supplementary set of transformations (T-rules). T-rules effected combinations and permutations of words in step-wise fashion to fill in structural gaps where P-rules alone could not generate the sentences which Harris had pointed out as problems. The structural forms generated by P-rules alone were said to constitute deep structure. Surface structure was then derived transformationally by T-rules from the kernel structures first generated by the operation of P-rules. In this way, Chomsky proposed to generate an infinite number of sentences using finite means (the closed sets of P-rules and T-rules). Syntax-based models of this vintage set semantics and phonology apart as linguistic processes to be approached separately.

Advent of junction grammar

[edit]Enter from the sidelines under these circumstances junction grammar (JG),[2] a model of natural language created by Eldon Lytle in the late 1960s and early 1970s. Junction grammar did not propose an amendment to Chomsky’s model of syntax, but purported to eliminate the need for transformations altogether through theoretical innovation and a novel design for generative grammars. Innovations fundamental to the new approach rejected common-place reliance on existing mathematics and formal language theory as tools for linguistic modeling and description "in deference to the intuition of more fundamental structuring in the body and in natural language itself", which appeared to provide a "universal base for linguistic description" - not only for natural language but also for the synthetic notation systems employed at the time for linguistic description. Implementation of the novelties in question entailed:

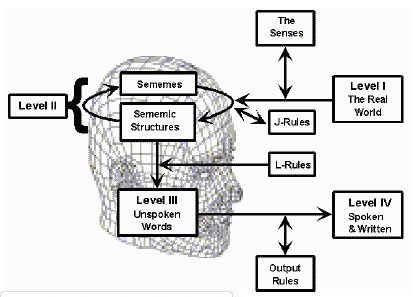

- Recasting the generative component in a semantic mold. This entailed dispensing with all operationally-deprived (and hence meaningless[3]) concatenations of P-rules and replacing them with junction rules (J-rules). J-rules operationalized for the first time a set of structural relations having universal syntacto-semantic significance and were based on the proposition that natural language "has its own math". Indeed, the position of JG was that lucubrations advanced by Chomsky and others as to whether this or that rung of a given formal language hierarchy was in principle capable of generating the sentences of natural language were ultimately circular, natural language being the thing which had created them all. Lytle held, in effect, that natural language is the meta-language upon which all forms of synthetic notation (including mathematics) supervene.[4]

- Complementing the base with biologically-oriented tracts (sometimes referred to in early JG literature as levels of representation) specializing in the distinct data types required to support lexicalization, articulation, orthography, etc. Junction theory explicitly prohibited the intermingling of distinct data types in a single representation, noting, for example, that one could not reasonably expect the vocal tract to have use for semantic data nor would the tract executing movements in the writing hand have any use for data driving the musculature of the vocal tract.

- Implementing Saussure's concept of signified-signifier linkages[5] by constructing coding grammars to transpose the structuring of one tract to the data type of another, as for example the transposition of J-rule structuring into the lexical strings required for writing or articulation. The essence of the departure from Chomsky's model in this case was that coding between biologically grounded tracts supplanted interpretation of deep structure in the abstract.

In sum, the junction grammar model of language moved the base into a sub-verbal semantic domain, added universally relevant linguistic operators to generation rules - thus, for example, solving the quandary of how to handle conjunction - incorporated auxiliary tracts together with specialized data types for voice, audition, etc., and added coding grammars to physically interface between tracts.

Transformations and their representations are superseded in JG

[edit]The right-left, top-down representational format which forced everything into one big tree was dispensed with in favor of an ensemble of interfacing representations which employed as many data-specific descriptions as necessary to capture the functionality of the diverse neurobiological manifestations of linguistic behavior being dealt with. Because target structuring was generable directly by the powerful J-rule base component and its array of linguistic operators, structure-oriented transformations required to combine kernel sentences or otherwise manipulate constituent structure were no longer required. Transformations formulated to generate correct word order and otherwise massage surface strings were supplanted by coding algorithms or grammars in the JG model. In general comparison, whereas Chomsky's model of syntax was by design derivative (speaking of its roots in existing forms of notation), derivational, and manipulative, the JG model was seminal (speaking of its formal novelty), modular, and transpositional. Despite polar differences, however, "Chomsky's objective of generating an infinite number of sentences with finite means, remained firmly intact in JG, as did the presumption of the fundamental innateness of natural language in the normal speaker/hearer."

J-rules

[edit]The base/junction rules (J-rules) of junction grammars are a set of algebraic formulas which generate for natural language what is akin to the Periodic Table of elements in chemistry, namely, an enumeration of well-formed linguistic structures[6] sometimes referred to as the periodic chart of constituent structures (PCCS).[7] J-rules generate composite structures consisting of the labeled operands entering into them plus the relations established by operators, and assign a category to the composite produced, to wit: X ∘ Y ≐ Z. Composites thus generated become potential operands for subsequent operations. The resulting algebra may be represented in string form (e.g., infix, postfix, reverse polish) or graphed as a branching diagram (J-tree).

Operators

[edit]The universal operators utilized by these rules are subjunction (*), conjunction (&), and adjunction (+), plus subtypes required under particular circumstances, e.g., restrictive (.*) versus non-restrictive (=*=) under subjunction. Expressed in more familiar terms, subjunction joins modifiers and complements to their heads, conjunction bonds constituents of homogeneous category which are in some respect similar, and adjunction[8] attaches relations and processes to their operands to form predicates, statements, and relational phrases. Supplemental operators effect the requirements of data management in mental modeling and conversational settings, corresponding in large part to the conventional classification of deixis.[9]

Operands

[edit]The operands of the base are drawn from a dictionary of sememes (meaningful concepts) which are by definition non-lexical in JG and may be plausibly viewed as electromagnetic signatures in their neurobiological setting[10] arising in connection with the formation of the mental models which provide the content and sensory linkages for their meanings.

Coding grammars

[edit]While the link between signified and signifier (as per Saussure) may be separately represented in a junction grammar, the interfacing between J-rule structuring and the coding extant in other components of the model is provided by context-sensitive coding grammars formulated as algorithms in an appropriate pattern matching language. For example, JG incorporates a lexical coding grammar consisting of lexical rules (L-rules) which encodes unordered sememic structuring as ordered lexical strings in a separate coding space.[11] A subsequent coding provides either the distinct patterning of the voice contour, or the formatting and punctuation of printed material.

Nature of JG analysis

[edit]With the foregoing as a frame of reference, we draw renewed attention to significant differences between JG sentence analysis and conventional syntactic analysis. The more familiar syntax approach analyzes phrases and sentences in terms of outward (surface) appearance, i.e. in terms of the words which they contain and how intuition groups them. Structural diagrams reflecting this method strive to depict constituent clusters in the word stream supplemented by labels, perhaps, or other information of focal interest to the analyst - some of it perhaps semantic. A change in word order requires that the diagram be changed.

In contrast, the JG approach, while taking note of the words, looks beyond them to the base constructions from which they have presumably been encoded, concentrating all the while on the semantic effects associated with the constituents and their structural nuances. JG diagrams (J-trees), therefore, are not directly representative of the word stream but, rather, of rational constructs in the neural mass having linkage with the words which are read or written. This means that a variety of structuring detail made explicit in J-trees is only implicit in the word stream. Conversely, it means that certain lexical detail made explicit in the word stream is only implicit in J-trees. For example, depending upon the ordering rules of the lexical coding grammar in play and the discourse context of the sentence - the same J-tree may yield alternative word orders in the lexical coding space.

The overall effect is that, inasmuch as JG uses coding rather than derivation as a bridge between levels of representation, much that models of syntax have been preoccupied with in deriving surface structure from deep structure (movement, deletion, insertion, etc.) is taken over in JG by coding operations.

Early application

[edit]The first junction grammar was worked out by Eldon Lytle in connection with his Ph.D. dissertation during the late 1960s,[12] in which he constructed such a grammar for the analysis of structural derivation in Russian. That grammar relegated the data to four levels of representation, corresponding to:

- Level 1 - real world structuring

- Level 2 - syntacto-semantic structuring reflective of mental activity

- Level 3 - lexical code transposed from level 2

- Level 4 - articulatory/orthographic code transposed from level 3

Lytle employed one of the junction operators (subjunction) as a formal device to impose the properties of a governing category upon existing structure to obtain derived forms (e.g., transform-ation - Noun * Verb).

Early literature

[edit]Early literature on JG was published in Junction Theory and Application, the journal of the BYU Translation Sciences Institute (TSI), and the proceedings of the university's Annual Linguistic Symposium.[13] More widely distributed overviews and analyses of junction theory first appeared in monograph form under Mouton's Janua Linguarum Series,[14] followed by papers and research reports presented at LACUS forum proceedings[15] and linguistic conferences abroad.[16]

Meanwhile, others tested the model for applicability to natural languages distanced from English. The latter studies applied junction analysis to particular languages of diverse families, including Finnish,[17] Samoan,[18] Korean,[19] Japanese,[20][21] and Russian.[22] Chinese, French, German, Spanish, Portuguese were added to this list in due course. Following these studies, it was concluded that the J-rules comprising the syntacto-semantic base of the model proffered a pool of structural possibilities from which all natural languages draw, but that none necessarily uses them all nor the same subset.

Early NLP application

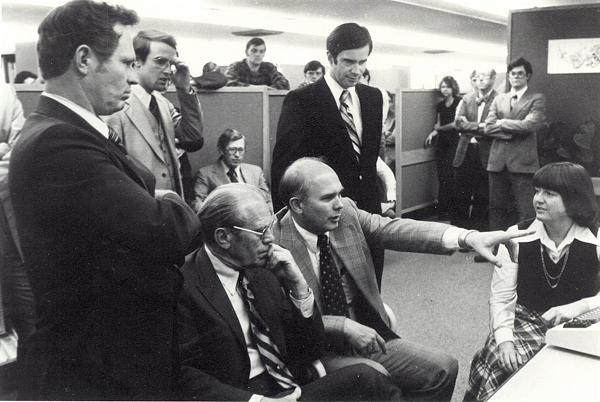

[edit]During the early 1970s, at the urging and under the auspices of the Department of Defense, Lytle and a team of colleagues conducted research at BYU in computer-assisted, human-interactive translation in which junction grammars were subjected to formalization[23][24][25] and applied to the problem of English-Russian translation. When positive results evinced proof of concept and a software prototype, funds from the private sector were invested to develop and test a one-to-many system based on the same translation model in expanded form - in particular, translations were synthesized from J-trees of English obtained via human interaction into Russian, Spanish, French, German, Portuguese, and Chinese.[26] Just prior to his inauguration as president, Gerald Ford and an entourage of VIPs visited the development site to receive a briefing on the concept and progress of the work.

At the conclusion of this endeavor, an alpha model of the software translated a book submitted for test purposes and yielded translations in the cited target languages. While significant post-editing was required, suggesting - among other improvements - that a second, language-specific interaction may well improve the design, the utility of computers as a useful translation aid, both in standardizing the use of terminology and expediting the overall translation process had been demonstrated.[27]

Junction phonology

[edit]JG adherents soon observed that the structural particulars of adjunction, conjunction, and subjunction are relevant beyond conventional linguistic structuring. More specifically, they lend themselves to such diverse structural scenarios as spousal interaction, departmental interaction in institutions, and a host of other real-world phenomena. In response to this observation, Lytle developed a method of phonological representation based on phonemic operands and junction operators.[28] This method of representation was subsequently utilized as the basis for synthesizing speech contours which reflected the structure of the source text.[29][30]

Pedagogy

[edit]JG proved to be classroom friendly, not only because its base structures were more intuitive, comprehensive, and explicit than traditional forms of diagramming, but also because their connection to overt forms of language were straightforward. Students quickly picked up on the challenge presented by the structural predictions of the PCCS and undertook to assist in verifying them. Among the memorable sentence types brought forward in class to challenge instructors and the chart were:

- "Come on up from out of down in under there."[31]

- "Watermelon is a fruit that you can eat, drink, and wash your face and hands in."

- "She drank more of her milkshake than I did of my exotic herbal tea."[32]

Studies were conducted to determine whether exposure to the JG method of diagramming was useful as a point of reference in teaching and learning foreign languages. Olson and Tuttle found that the answer was affirmative.[33][34]

Educational software

[edit]Subsequent to his experience with computer-assisted translation and voice synthesis, Lytle undertook the development of JG-based educational software in the private sector. To facilitate his objectives he designed what is referred to as JG Markup Notation and created a pattern matching language (JGPL) to complement it.[35] This endeavor relied on the escalating power of micro-computers to field JG-powered applications able to provide constructive feedback to student writers and their teachers in a writing-lab context. The project culminated in a successful field test in a district of the public school system[36] and ultimately a study conducted jointly with the Educational Testing Service (ETS) to evaluate the potential of such software to holistically score student written products. The joint conclusion:

...computer analysis of student ways can provide a level of detail in feedback to students, teachers, and others that is not possible using human readers alone. This kind of feedback has important implications for instruction in English composition. Moreover, computer analysis can provide detailed feedback on many written products, even lengthy ones; a teacher of English will normally provide detailed feedback on only a few brief essays.[37]

In more recent research, Millett has independently demonstrated the ability of the same JG-based software to evaluate the writing of ESL students of mixed nationalities.[38] Owing to its demonstrated potential for educational assessment, overtures have been made to declare JGPL and its associated software applications - The WordMap Writing-Aids Software Ensemble - ‘open source’ so that it may serve as an expandable and adaptable public educational resource. This proposal is presently 'under advisement.' Meanwhile, to test the utility of JG-related writing assessment in an internet environment, the JGPL analysis engine has been made available free of charge for experimentation in an online writing-lab setting.[15]

Sample J-tree

[edit]

This tree was constructed in a tutorial setting equipped with experimental software for computer-aided JG diagramming. The explicit specification of junction operators on the squiggly lines connecting the node labels. This is one aspect of JG linguistic description which sets it apart from other models and from which its name derives. Conventional branching diagrams may also be used, but in any case the junction operator must be written between the nodes serving as its operands. "Junction theory holds that linguistic structures written without junctions have no more meaning/substance than concatenations of algebraic operands written without any indication of the operations to be performed with them." Because of its algebraic format - and owing to its use of non-verbal formatives - JG base description has of late been dubbed MindMath.

Review of theoretical and practical highlights

[edit]- As formerly noted, junction grammar does not analyze or describe natural language with respect to formal, synthetic languages (whether separately or arranged in some hierarchy), but considers all of these to be derivative in the sense that they themselves are defined with reference to one natural language or another. Junction grammar construes this dependency upon natural language to mean that natural language is itself the meta-language upon which all formal, synthetic languages (including all forms of mathematics and computer programming languages) supervene. From this it follows that to analyze the meta-language with derivative tools is circular and can yield no more in a particular case than the subset of the source which it circumscribes. For this reason, junction grammar holds that "natural language has its own math." To discover the rudiments of this foundational math and expand upon it has been the objective of proponents since its inception.

- Junction theory further insists that natural language modeling be done with reference to the biology of the organism in which the language manifests. Grammars fashioned in this way use levels of representation or tracts in which purpose-specific transpositions of language occur and are separately coded in their peculiar data types - and must therefore be separately described. In a word, JG does not permit intermixture of data types in a single representation except where used to gloss correspondents in related tracts, i.e., to specify context-sensitivity between types.

- "Junction theory anticipates that the various interfaces between tracts and their respective coding grammars will correspond to linguistic R&D now subsumed under distinct titles and demonstrate the utility of dealing with them as the integral components of a larger unified model." For example, the sememic data of J-trees lacks the ambiguity and imprecision of lexical strings and is therefore an attractive candidate for use in rational forms of mental modeling. The construction of algorithms to encode this data type as lexical strings forces the analyst to confront the subtleties of morphology (e.g., word-building) and the logic of similarity and difference associated with the principles of semiotics. By way of further illustration: The data type developed for the lexical coding space (tract) of the JG model at its inception served readily as input to the coding algorithms required for voice synthesis (as described above). The overall perspective which emerged in this case was a trace from phonemic representation, to phonetic representation, to the computerized generation of wave forms simulating voice. Junction theory argues for the utility of operating from the perspective of a comprehensive, unified model with interlocking components - at least to the extent that the big picture comes into focus.

Cross-fertilization

[edit]Some theoreticians have suggested that it would be productive to merge certain features of junction grammar with other models. Millett and Lonsdale, in fact, have proposed an expansion of tree adjoining grammar (TAG) to create junction trees.[39]

JG upgrade model

[edit]Orders of specificity

[edit]In planning for systematic expansion of the JG model of language, "elevator shafts" were included in its original layout for the eventual incorporation of modules to deal with such phenomena as sensation, cognition, mental modeling, and communication - all of these being considered integral to the operation of language in its natural setting. Inasmuch as the addition of modules entails addition of data types and their dictionaries, reference was made in the literature to such future expansion as a function of orders of specificity, with each module or significant rule refinement ascending to a higher order.[40]

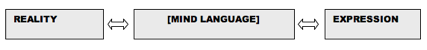

Language, thought, and reality

[edit]JG had - through its use of non-verbal data types - tacitly implemented Whorf's expanded definition of language from its inception. To wit:

...The linguistic order embraces all symbolism, all symbolic process, all process of reference and of logic...[41]

At that point where formal development of the module for level 1 (the "real" world) was contemplated, it became apparent that JG was graduating from a model managing the connection between mind and expression as a matter of linkage between familiar and readily representable data types (e.g., between the sememic data of the mind tract and those required for the vocal tract) ... to a model which must now also make explicit the connection between mind and reality in a general way among a profusion of data types systematically written upon by mind in the "cold and dreary" world itself. The fact of the matter was that even though level 1 had been occupying space in the schematic from its inception, with the exception of voice synthesis, no order of specificity had as yet been implemented in JG to formalize the linkage between sememic data and forms of physical reality.

That the linkage existed was simply a matter of observation: Each time an instruction was given and carried out, for example, the structuring specified by the instruction was realized in the physical media to which the terms of the language referred. Ditto for the relation between recipes and cakes, blueprints and houses, constitutions and governments, etc. In each case, the linguistic relations became real, with the referents to which they applied representing themselves - a circumstance referred to in the parlance of JG as reflexive symbolism. As the ramifications of this scenario were examined, it became apparent that the emergent JG upgrade model was now making direct contact with Benjamin Whorf's full conceptual model, which saw structuring in the world at large as a physical extension of the language in mind, while writing and speech - by way of comparison - were rational extensions of language in mind. To quote Whorf:

All that I have to say on the subject that may be new is of the premonition in language of the unknown, vaster world - that world of which the physical is but a surface or skin, and yet which we are in, and belong to ... Speech is the best show man puts on ... But we suspect the watching Gods perceive that the order in which his amazing set of tricks builds up to a great climax has been stolen - from the Universe! [42]

Here then was the explanation for the relevance of JG's fundamental constituent relations to external relationships and the reason why Lytle had been able to represent articulatory structuring with junction operations. There is indeed a linguistic linkage between mind and reality ... and hence, theoretically, a linguistic description for every structured phenomenon. This, perhaps, portends the ultimate ascent of linguistic science to a level of prominence previously envisioned only by Whorf.

Linking to reality

[edit]To formally implement the linkage in question as a feature of the JG upgrade model, it was necessary to add notational symbolism able to describe the nature of the coding which transpires at the mind-reality interface. To this end, symbols were introduced to signify the mental processes which are used to create instruction and to apply it, as well as the act of describing such processes. To this end, corresponding operators were added to the JG inventory for:

- prescription (formula creation)

- inscription (formula implementation)

- description (discussion)

Other symbolism was incorporated to signify authorship ( Kx ), as well as the language being employed (Lx ) vis-a-vis the language continuum (the global blend of ideolects, dialects, and languages), as well as the data types operative as media to be structured in the physical domain (Mx).[43]

Versatility of the notation

[edit]These notational addenda, when systematically organized and augmented with other pertinent formal devices, enable the linguist to write expressions for such diverse activities as mental modeling, conversation, putting one's words into action, and use of the empirical method for discovery. Inasmuch as the system of classical junction rules can be derived directly from the new, more comprehensive symbolism, it is clear that the enhancements in question are a natural extension of the original model.

References

[edit]- ^ Harris, Zellig S. (1981). "Co-occurrence and transformation." In Papers in Syntax. Dordrecht: Reidel, 1 edition. Synthese language library, vol.14, Henry Hiz, ed.

- ^ Baird, Rey L. (1972) “Essentials of Junction Grammar.” LINGUISTICS SYMPOSIUM: AUTOMATIC LANGUAGE PROCESSING, 30–31 March 1972. Provo, Utah : BYU Language Research Center. [1]

- ^ Junction theory held that a simple concatenation of operands in linguistic formulations was as 'meaningless' as any string of mathematical operands devoid of operators.

- ^ Lytle, Eldon G. (1974) “A Summary Comparison of Junction Grammar and Transformational Grammar.” First published in LINGUISTICS SYMPOSIUM: AUTOMATED LANGUAGE PROCESSING, 9 April 1974. Republished in JUNCTION THEORY AND APPLICATION, V. 2, no. 2, Spring 1979. Provo, Utah : BYU Translation Sciences Institute [2]

- ^ De Saussure, Ferdinand. Course in General Linguistics. (1966) New York:McGraw-Hill Book Company, 2 edition.

- ^ Melby, Alan K. 1985. “Generalization and prediction of syntactic patterns in junction grammar”. In Linguistics and Philosophy. Festschrift for Rulon S. Wells, Makkai, Adam and Alan K. Melby (eds.).

- ^ See Lytle, Eldon G.(2009) LANGUAGE in Capital Letters (eBook edition), Chapters 4, 8. Rose Valley, NV: Linguistic Technologies, Inc. [3]

- ^ Not to be confused with ‘Chomskyan adjunction.’

- ^ Lytle, Eldon G. (1979) “Doing More with Structure.” JUNCTION THEORY AND APPLICATION, V. 2, no. 2, Spring 1979. Provo, Utah : BYU Translation Sciences Institute. [4]

- ^ See Presman, A.S. (1970) Electromagnetic Fields and Life. New York:Plenum Press, 1 edition. Translation of “Elektromagnitnye polia i zhivaia priroda” by F.L. Sinclair; edited by Frank A. Brown, Jr.

- ^ See, for example, Billings, Floyd and Thompson, Tracy (1972). “Proposals for Ordering Well-formed Syntactic Statements.” LINGUISTICS SYMPOSIUM: AUTOMATIC LANGUAGE PROCESSING, 30–31 March 1972. Provo, Utah: BYU Language Research Center.[5]

- ^ Lytle, Eldon G. (1972) Structural Derivation in Russian. Ph.D. Dissertation. University of Illinois, Champaign/Urbana

- ^ This symposium was sponsored in the early 1970s either by TSI or the Language Research Center and subsequently by the Deseret Language and Linguistics Society. Materials published by these institutions have been catalogued and are currently available in the BYU Library.

- ^ Lytle, Eldon G. (1974). A Grammar of Subordinate Structures in English. Mouton (Janua Linguarum: Series Practica, 175, Studia Memoriae Nicolai Van Wijk Dedicata), The Hague, Paris.

- ^ Lytle, Eldon G. (1979) “Junction Grammar: Theory and Application.” THE SIXTH ANNUAL LACUS FORUM. (Columbia, SC: Hornbeam Press, Incorporated)

- ^ Melby, Alan K. (1974) "Some Aspects of Junction Grammar." Paper read as an invited lecture at the International Summer School of Computational Linguistics held in Pisa, Italy.

- ^ Luthy, Melvin J. (1982) “Junction Grammar Analysis of Finnish Passives.” Ural-Altaic Yearbook 54. Gyula Decsy & A.J.E. Bodrogligeti, eds. (Bloomington: Eurolingua)

- ^ Baird, Rey L. (1974) "A Variable Recursive Mechanism in Samoan. Ph.D. Dissertation: Indiana University

- ^ Lee, Kwang Oo (1980) A Contrastive Analysis Between Korean and English Relative Clauses. MA thesis: BYU

- ^ Higgins, Seiko T. (1972) A Comparison of Homogeneous and Heterogeneous Subjunction in Japanese and English. MA thesis: BYU

- ^ Suzuki, Kenji (1973) Junction Grammar as Applied to Japanese Synthesis. MA thesis:BYU

- ^ Gibb, Daryl K. (1970) An application to mechanical translation of a variable recursive algorithm based on the operations of union and intersection. MA thesis: BYU

- ^ Melby, Alan K. (1972). “A Formalization of Junction Grammar.” LINGUISTICS SYMPOSIUM: AUTOMATIC LANGUAGE PROCESSING, 30–31 March 1972. Provo, Utah: BYU Language Research Center

- ^ Lytle, Eldon and Packard, Dennis (1974). “Junction Grammar as a Base for Natural Language Processing.” First published in LINGUISTIC SYMPOSIUM: AUTOMATED LANGUAGE PROCESSING, 9 April 1974. Republished in JUNCTION THEORY AND APPLICATION, V. 2, no. 2, Spring 1979. Provo, Utah: BYU Translation Sciences Institute)”

- ^ Norman, Theodore A. (1972). “Random Generation of Well-formed Syntactic Statements.” LINGUISTICS SYMPOSIUM: AUTOMATIC LANGUAGE PROCESSING, 30–31 March 1972. Provo, Utah: BYU Language Research Center.

- ^ Melby, Alan K. (1973) "Junction Grammar and Machine Translation." Paper read at the International Conference on Computational Linguistics held in Pisa, Italy.

- ^ ALP Systems, a private corporation subsequently acquired rights from BYU to commercialize the technologies developed under its computer-interactive translation research programs. ALP Systems later offered translation services as ALPNET.

- ^ Lytle, Eldon G. (1976). “Junction Grammar as a Base for Dynamic Phonological Representation.” LANGUAGE & LINGUISTICS SYMPOSIUM, 22–23 March 1976. Provo, Utah: BYU Language Research Center.

- ^ Melby, Alan K., Strong, William J., Lytle, Eldon G., Millett, Ronald P. . (1976) "Evaluation of Rule-Generated Pitch Contours" Paper for the 92nd Acoustical Society of America Meeting

- ^ Melby, Alan K. (1976) Pitch Contour Generation in Speech Synthesis: A Junction Grammar Approach. Ph.D. Dissertation, published as AJCL microfiche #60, Spring 1977.

- ^ Lytle deemed this sentence - a verb followed by nine prepositions and particles - of sufficient interest to analyze in a published article. See Lytle, Eldon G.(1985) “Come on up.” LINGUISTICS AND PHILOSOPHY. Essays in honor of Rulon S. Wells. Amsterdam/Philadelphia: John Benjamins Publishing Co. [6]

- ^ Lytle published a lengthy response to this challenge. See Lytle, Eldon G.(1975) “A Junction Grammar Analysis of Quantifiers.” IMPLEMENTATION GUIDE. BYU Translation Sciences Institute. [7]

- ^ Olsen, Royden S. and Tuttle, David M. (1972) “The Effect of Language Trees on the Acquisition of Generative Capacity in a Foreign Language.” LINGUISTICS SYMPOSIUM: AUTOMATIC LANGUAGE PROCESSING, 29–30 March 1972. Provo, Utah: BYU Language Research Center.[8]

- ^ Olsen, Roydon S. (1980).The Effect of Language Trees in Foreign Language Learning. Masters Thesis:BYU.

- ^ Lytle, Eldon G.(1987) JGPL User Manual. Gardnerville, NV: LinguisticTechnologies, Inc. Corporate document.

- ^ Mathews, Neldon C. and Lytle, E. G. (1986) “Field Test of the WordMAP(TM) Writing Aids System.” Panaca, NV. District document.

- ^ Breland, Hunter M. and Lytle, Eldon G.(1990) “Computer-Assisted Writing Skill Assessment Using WordMAP (TM).” Paper presented at the Annual Meetings of the American Educational Research Association and the National Council on Measurement in Education. Boston, MA).[9]

- ^ Millett, Ronald P.(2006) AUTOMATIC HOLISTIC Scoring OF ESL ESSAYS USING LINGUISTIC MATURITY ATTRIBUTES. MA Thesis: BYU. [10]

- ^ Millet, Ronald and Lonsdale, Deryle (2005). “Expanding Tree Adjoining Grammar to Create Junction Grammar Trees.” Proceedings of the Seventh International Workshop on Tree Adjoining Grammar and Related Formalisms, pg. 162-170; BC, Canada: Simon Fraser University. [11]

- ^ Lytle, Eldon G., "The Evolution of Junction Grammar" (1977). Translation Sciences Institute, Brigham Young University, September 1975, with minor revisions in April 1976, and October, 1977. See `Orders of Specificity.’[12]

- ^ Carroll, John B., editor (1998) Language, Thought, and Reality: Selected Writings of Benjamin Lee Whorf. THE MIT PRESS, pg. 248-49.

- ^ Carroll (1998), pg. 252

- ^ Lytle, Eldon G. (2009) LANGUAGE in Capital Letters (eBook edition), Chapt. 17. Rose Valley NV: Linguistic Technologies, Inc. [13]