Progress in artificial intelligence


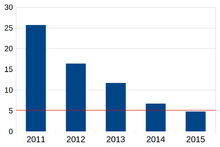

The error rate of AI by year. Red line - the error rate of a trained human on a particular task.

Progress in artificial intelligence (AI) refers to the advances, milestones, and breakthroughs that have been achieved in the field of artificial intelligence over time. AI is a multidisciplinary branch of computer science that aims to create machines and systems capable of performing tasks that typically require human intelligence. Artificial intelligence applications have been used in a wide range of fields including medical diagnosis, economic-financial applications, robot control, law, scientific discovery, video games, and toys. However, many AI applications are not perceived as AI: "A lot of cutting edge AI has filtered into general applications, often without being called AI because once something becomes useful enough and common enough it's not labeled AI anymore."[1][2] "Many thousands of AI applications are deeply embedded in the infrastructure of every industry."[3] In the late 1990s and early 21st century, AI technology became widely used as elements of larger systems,[3][4] but the field was rarely credited for these successes at the time.

Kaplan and Haenlein structure artificial intelligence along three evolutionary stages: 1) artificial narrow intelligence – applying AI only to specific tasks; 2) artificial general intelligence – applying AI to several areas and able to autonomously solve problems they were never even designed for; and 3) artificial super intelligence – applying AI to any area capable of scientific creativity, social skills, and general wisdom.[2]

To allow comparison with human performance, artificial intelligence can be evaluated on constrained and well-defined problems. Such tests have been termed subject matter expert Turing tests. Smaller problems also provide more achievable goals, and there is an ever-increasing number of positive results.

Humans still substantially outperform both GPT-4 and models trained on the ConceptARC benchmark: those systems scored 60% on most categories and 77% on one, while humans scored 91% on all categories and 97% on one.[5]

Current performance in specific areas

| Game | Champion year[6] | Legal states (log10)[7] | Game tree complexity (log10)[7] | Game of perfect information? | Ref. |
|---|---|---|---|---|---|
| Draughts (checkers) | 1994 | 21 | 31 | Perfect | [8] |
| Othello (reversi) | 1997 | 28 | 58 | Perfect | [9] |
| Chess | 1997 | 46 | 123 | Perfect | |
| Scrabble | 2006 | | | | [10] |
| Shogi | 2017 | 71 | 226 | Perfect | [11] |
| Go | 2016 | 172 | 360 | Perfect | |
| 2p no-limit hold 'em | 2017 | | | Imperfect | [12] |
| StarCraft | - | 270+ | | Imperfect | [13] |
| StarCraft II | 2019 | | | Imperfect | [14] |
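Because the table reports sizes as base-10 logarithms, comparing two games reduces to subtracting exponents. The following is a minimal sketch of that arithmetic, using only the rows for which the table gives both figures; the dictionary and function names are illustrative assumptions and do not come from the cited sources.

```python
# log10 values copied from the table: (legal states, game-tree complexity).
# Rows with missing figures (Scrabble, poker, StarCraft) are left out.
GAMES = {
    "Draughts (checkers)": (21, 31),
    "Othello (reversi)": (28, 58),
    "Chess": (46, 123),
    "Shogi": (71, 226),
    "Go": (172, 360),
}


def orders_of_magnitude_apart(game_a: str, game_b: str) -> tuple[int, int]:
    """Return how many powers of ten separate game_a from game_b,
    as (legal states, game-tree complexity)."""
    states_a, tree_a = GAMES[game_a]
    states_b, tree_b = GAMES[game_b]
    # Subtracting logarithms is the same as dividing the raw counts.
    return states_a - states_b, tree_a - tree_b


if __name__ == "__main__":
    states_gap, tree_gap = orders_of_magnitude_apart("Go", "Chess")
    print(f"Go has roughly 10^{states_gap} times as many legal states as chess")
    print(f"and a game tree roughly 10^{tree_gap} times larger.")
```

By these figures, Go exceeds chess by about 126 orders of magnitude in legal states and 237 in game-tree complexity.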

There are many useful abilities that can be described as showing some form of intelligence. Considering them separately gives better insight into the comparative success of artificial intelligence in different areas.

AI, like electricity or the steam engine, is a general-purpose technology. There is no consensus on how to characterize which tasks AI tends to excel at.[15] Some versions of Moravec's paradox observe that humans are more likely to outperform machines in areas such as physical dexterity that have been the direct target of natural selection.[16] While projects such as AlphaZero have succeeded in generating their own knowledge from scratch, many other machine learning projects require large training datasets.[17][18] Researcher Andrew Ng has suggested, as a "highly imperfect rule of thumb", that "almost anything a typical human can do with less than one second of mental thought, we can probably now or in the near future automate using AI."[19]

Games provide a high-profile benchmark for assessing rates of progress; many games have a large professional player base and a well-established competitive rating system. AlphaGo brought the era of classical board-game benchmarks to a close in 2016, when DeepMind's program defeated Lee Sedol, one of the world's top professional Go players.[20] Games of imperfect knowledge provide new challenges to AI in the area of game theory; the most prominent milestone in this area was Libratus' poker victory in 2017.[21][22] E-sports continue to provide additional benchmarks; Facebook AI, DeepMind, and others have engaged with the popular StarCraft franchise of video games.[23][24]

Broad classes of outcome for an AI test may be given as:

- optimal: it is not possible to perform better (note: some of these entries were solved by humans)

- super-human: performs better than all humans

- high-human: performs better than most humans

- par-human: performs similarly to most humans

- sub-human: performs worse than most humans
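The classes above can be read as an ordered scale. The sketch below is one hypothetical way to encode them, assuming performance is summarized as the fraction of human participants that a system outperforms. The names `Outcome` and `classify`, the 50% reading of "most", and the `par_band` tolerance are all assumptions made for illustration; the list itself fixes no numeric thresholds.

```python
from enum import Enum


class Outcome(Enum):
    OPTIMAL = "optimal"
    SUPER_HUMAN = "super-human"
    HIGH_HUMAN = "high-human"
    PAR_HUMAN = "par-human"
    SUB_HUMAN = "sub-human"


def classify(fraction_beaten: float,
             provably_optimal: bool = False,
             par_band: float = 0.1) -> Outcome:
    """Map the fraction of humans outperformed (0.0 to 1.0) to a class.

    par_band is an assumed tolerance around the median human; it is
    not part of the original definitions.
    """
    if not 0.0 <= fraction_beaten <= 1.0:
        raise ValueError("fraction_beaten must lie between 0 and 1")
    if provably_optimal:
        # No performer, human or machine, can do better.
        return Outcome.OPTIMAL
    if fraction_beaten == 1.0:
        return Outcome.SUPER_HUMAN
    if abs(fraction_beaten - 0.5) <= par_band:
        return Outcome.PAR_HUMAN
    if fraction_beaten > 0.5:
        return Outcome.HIGH_HUMAN
    return Outcome.SUB_HUMAN


if __name__ == "__main__":
    for score in (1.0, 0.9, 0.5, 0.2):
        print(score, classify(score).value)
```

In practice the boundary between classes depends on which human population is sampled, so any fixed cut-off of this kind is a simplification.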

Optimal

- Tic-tac-toe

- Connect Four: 1988

- Checkers (aka 8x8 draughts): Weakly solved (2007)[25]

- Rubik's Cube: Mostly solved (2010)[26]

- Heads-up limit hold'em poker: Statistically optimal in the sense that "a human lifetime of play is not sufficient to establish with statistical significance that the strategy is not an exact solution" (2015)[27]

Super-human

- Othello (aka reversi): c. 1997[9]

- Scrabble:[28][29] 2006[10]

- Backgammon: c. 1995–2002[30][31]

- Chess: Supercomputer (c. 1997); Personal computer (c. 2006);[32] Mobile phone (c. 2009);[33] Computer defeats human + computer (c. 2017)[34]

- Jeopardy!: Question answering, although the machine did not use speech recognition (2011)[35][36]

- Arimaa: 2015[37][38]

- Shogi: c. 2017[11]

- Go: 2017[39]

- Heads-up no-limit hold'em poker: 2017[12]

- Six-player no-limit hold'em poker: 2019[40]

- Gran Turismo Sport: 2022[41]

High-human

- Crosswords: c. 2012[42][43]

- Freeciv: 2016[44]

- Dota 2: 2018[45]

- Bridge card-playing: According to a 2009 review, "the best programs are attaining expert status as (bridge) card players", excluding bidding.[46]

- StarCraft II: 2019[47]

- Mahjong: 2019[48]

- Stratego: 2022[49]

- No-Press Diplomacy: 2022[50]

- Hanabi: 2022[51]

- Natural language processing[citation needed]

Par-human

- Optical character recognition for ISO 1073-1:1976 and similar special characters.[citation needed]

- Classification of images[52]

- Handwriting recognition[53]

- Facial recognition[54]

- Visual question answering[55]

- SQuAD 2.0 English reading-comprehension benchmark (2019)[56]

- SuperGLUE English-language understanding benchmark (2020)[56]

- Some school science exams (2019)[57]

- Some tasks based on Raven's Progressive Matrices[58]

- Many Atari 2600 games (2015)[59]

Sub-human


- Optical character recognition for printed text (nearing par-human for Latin-script typewritten text)

- Object recognition[clarification needed]

- Various robotics tasks that may require advances in robot hardware as well as AI, including:

  - Stable bipedal locomotion: Bipedal robots can walk, but are less stable than human walkers (as of 2017)[60]

  - Humanoid soccer[61]

- Speech recognition: "nearly equal to human performance" (2017)[62]

- Explainability. Current medical systems can diagnose certain medical conditions well, but cannot explain to users why they made the diagnosis.[63]

- Many tests of fluid intelligence (2020)[58]

- Bongard visual cognition problems, such as the Bongard-LOGO benchmark (2020)[58][64]

- Visual Commonsense Reasoning (VCR) benchmark (as of 2020)[56]

- Stock market prediction: Financial data collection and processing using machine learning algorithms

- Angry Birds video game, as of 2020[65]

- Various tasks that are difficult to solve without contextual knowledge, including:

Proposed tests of artificial intelligence

In his famous Turing test, Alan Turing picked language, the defining feature of human beings, for its basis.[66] The Turing test is now considered too exploitable to be a meaningful benchmark.[67]

The Feigenbaum test, proposed by the inventor of expert systems, tests a machine's knowledge and expertise about a specific subject.[68] A paper by Jim Gray of Microsoft in 2003 suggested extending the Turing test to speech understanding, speaking and recognizing objects and behavior.[69]

Proposed "universal intelligence" tests aim to compare how well machines, humans, and even non-human animals perform on problem sets that are as generic as possible. At an extreme, the test suite can contain every possible problem, weighted by Kolmogorov complexity; however, these problem sets tend to be dominated by impoverished pattern-matching exercises where a tuned AI can easily exceed human performance levels.[70][71][72][73][74]
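One way to write such a complexity-weighted score follows the general form of Legg and Hutter's universal intelligence measure. This is offered as an illustrative assumption; the sources cited above may define the weighting differently, and all symbols below are introduced here rather than taken from the article.

```latex
\Upsilon(\pi) \;=\; \sum_{\mu \in E} 2^{-K(\mu)} \, V_{\mu}^{\pi}
```

Here $\pi$ is the agent being tested, $E$ is the set of problems, $K(\mu)$ is the Kolmogorov complexity of problem $\mu$, and $V_{\mu}^{\pi}$ is the expected score of $\pi$ on $\mu$. Because the weight $2^{-K(\mu)}$ decays exponentially with complexity, the simplest problems contribute most of the sum, which is consistent with the observation that such suites tend to be dominated by simple pattern-matching exercises.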

Exams

According to OpenAI, in 2023 ChatGPT GPT-4 scored in the 90th percentile on the Uniform Bar Exam. On the SAT, GPT-4 scored in the 89th percentile on math and the 93rd percentile in Reading & Writing. On the GRE, it scored in the 54th percentile on the writing test, the 88th percentile on the quantitative section, and the 99th percentile on the verbal section. It scored in the 99th to 100th percentile on the 2020 USA Biology Olympiad semifinal exam. It scored a perfect "5" on several AP exams.[75]

Independent researchers found in 2023 that ChatGPT GPT-3.5 "performed at or near the passing threshold" for the three parts of the United States Medical Licensing Examination. GPT-3.5 was also assessed as attaining a low but passing grade on exams for four law school courses at the University of Minnesota.[75] GPT-4 passed a text-based radiology board–style examination.[76][77]

Competitions

Many competitions and prizes, such as the ImageNet Challenge, promote research in artificial intelligence. The most common areas of competition include general machine intelligence, conversational behavior, data-mining, robotic cars, and robot soccer as well as conventional games.[78]

Past and current predictions

An expert poll around 2016, conducted by Katja Grace of the Future of Humanity Institute and associates, gave median estimates of 3 years for championship Angry Birds, 4 years for the World Series of Poker, and 6 years for StarCraft. On more subjective tasks, the poll gave 6 years for folding laundry as well as an average human worker, 7–10 years for expertly answering 'easily Googleable' questions, 8 years for average speech transcription, 9 years for average telephone banking, and 11 years for expert songwriting, but over 30 years for writing a New York Times bestseller or winning the Putnam math competition.[79][80][81]

Chess


An AI defeated a grandmaster in a regulation tournament game for the first time in 1988; rebranded as Deep Blue, it beat the reigning human world chess champion in 1997 (see Deep Blue versus Garry Kasparov).[82]

| Year prediction made | Predicted year | Number of years | Predictor | Contemporaneous source |
|---|---|---|---|---|
| 1957 | 1967 or sooner | 10 or less | Herbert A. Simon, economist[83] | |
| 1990 | 2000 or sooner | 10 or less | Ray Kurzweil, futurist | Age of Intelligent Machines[84] |

Go

AlphaGo defeated a European Go champion in October 2015, and in March 2016 defeated Lee Sedol, one of the world's top players (see AlphaGo versus Lee Sedol). According to Scientific American and other sources, most observers had expected superhuman Computer Go performance to be at least a decade away.[85][86][87]

| Year prediction made | Predicted year | Number of years | Predictor | Affiliation | Contemporaneous source |
|---|---|---|---|---|---|
| 1997 | 2100 or later | 103 or more | Piet Hut, physicist and Go fan | Institute for Advanced Study | New York Times[88][89] |
| 2007 | 2017 or sooner | 10 or less | Feng-Hsiung Hsu, Deep Blue lead | Microsoft Research Asia | IEEE Spectrum[90][91] |
| 2014 | 2024 | 10 | Rémi Coulom, Computer Go programmer | CrazyStone | Wired[91][92] |

Human-level artificial general intelligence (AGI)

AI pioneer and economist Herbert A. Simon inaccurately predicted in 1965: "Machines will be capable, within twenty years, of doing any work a man can do". Similarly, in 1970 Marvin Minsky wrote that "Within a generation... the problem of creating artificial intelligence will substantially be solved."[93]

Four polls conducted in 2012 and 2013 suggested that the median estimate among experts for when AGI would arrive was 2040 to 2050, depending on the poll.[94][95]

The Grace poll around 2016 found that results varied depending on how the question was framed. Respondents asked to estimate "when unaided machines can accomplish every task better and more cheaply than human workers" gave an aggregated median answer of 45 years and a 10% chance of it occurring within 9 years. Other respondents asked to estimate "when all occupations are fully automatable. That is, when for any occupation, machines could be built to carry out the task better and more cheaply than human workers" estimated a median of 122 years and a 10% probability of 20 years. The median response for when "AI researcher" could be fully automated was around 90 years. No link was found between seniority and optimism, but Asian researchers were much more optimistic than North American researchers on average; Asian researchers predicted 30 years on average for "accomplish every task", compared with the 74 years predicted by North Americans.[79][80][81]

| Year prediction made | Predicted year | Number of years | Predictor | Contemporaneous source |
|---|---|---|---|---|
| 1965 | 1985 or sooner | 20 or less | Herbert A. Simon | The shape of automation for men and management[93][96] |
| 1993 | 2023 or sooner | 30 or less | Vernor Vinge, science fiction writer | "The Coming Technological Singularity"[97] |
| 1995 | 2040 or sooner | 45 or less | Hans Moravec, robotics researcher | Wired[98] |
| 2008 | Never / Distant future[note 1] | | Gordon E. Moore, inventor of Moore's Law | IEEE Spectrum[99] |
| 2017 | 2029 | 12 | Ray Kurzweil | Interview[100] |

See also

- Applications of artificial intelligence

- List of artificial intelligence projects

- List of emerging technologies

References

- ^ AI set to exceed human brain power Archived 2008-02-19 at the Wayback Machine CNN.com (July 26, 2006)

- ^ a b Kaplan, Andreas; Haenlein, Michael (2019). "Siri, Siri, in my hand: Who's the fairest in the land? On the interpretations, illustrations, and implications of artificial intelligence". Business Horizons. 62: 15–25. doi:10.1016/j.bushor.2018.08.004. S2CID 158433736.

- ^ a b Kurzweil 2005, p. 264

- ^ National Research Council (1999), "Developments in Artificial Intelligence", Funding a Revolution: Government Support for Computing Research, National Academy Press, ISBN 978-0-309-06278-7, OCLC 246584055 under "Artificial Intelligence in the 90s"

- ^ Biever, Celeste (25 July 2023). "ChatGPT broke the Turing test — the race is on for new ways to assess AI". Nature. Retrieved 26 July 2023.

- ^ Approximate year AI started beating top human experts

- ^ a b van den Herik, H.Jaap; Uiterwijk, Jos W.H.M.; van Rijswijck, Jack (January 2002). "Games solved: Now and in the future". Artificial Intelligence. 134 (1–2): 277–311. doi:10.1016/S0004-3702(01)00152-7.

- ^ Madrigal, Alexis C. (2017). "How Checkers Was Solved". The Atlantic. Archived from the original on 6 May 2018. Retrieved 6 May 2018.

- ^ a b "www.othello-club.de". berg.earthlingz.de. Archived from the original on 2018-07-15. Retrieved 2018-07-15.

- ^ a b Webley, Kayla (15 February 2011). "Top 10 Man-vs.-Machine Moments". Time. Archived from the original on 26 December 2017. Retrieved 28 December 2017.

- ^ a b "Shogi prodigy breathes new life into the game | The Japan Times". The Japan Times. Archived from the original on 2018-07-15. Retrieved 2018-07-15.

- ^ a b Brown, Noam; Sandholm, Tuomas (2017). "Superhuman AI for heads-up no-limit poker: Libratus beats top professionals". Science. 359 (6374): 418–424. Bibcode:2018Sci...359..418B. doi:10.1126/science.aao1733. PMID 29249696.

- ^ "Facebook Quietly Enters StarCraft War for AI Bots, and Loses". WIRED. 2017. Archived from the original on 7 May 2018. Retrieved 6 May 2018.

- ^ Sample, Ian (30 October 2019). "AI becomes grandmaster in 'fiendishly complex' StarCraft II". The Guardian. Archived from the original on 29 December 2020. Retrieved 28 February 2020.

- ^ Brynjolfsson, Erik; Mitchell, Tom (22 December 2017). "What can machine learning do? Workforce implications". Science. 358 (6370): 1530–1534. Bibcode:2017Sci...358.1530B. doi:10.1126/science.aap8062. PMID 29269459. S2CID 4036151. Archived from the original on 29 September 2021. Retrieved 7 May 2018.

- ^ "IKEA furniture and the limits of AI". The Economist. 2018. Archived from the original on 24 April 2018. Retrieved 24 April 2018.

- ^ Sample, Ian (18 October 2017). "'It's able to create knowledge itself': Google unveils AI that learns on its own". the Guardian. Archived from the original on 19 October 2017. Retrieved 7 May 2018.

- ^ "The AI revolution in science". Science | AAAS. 5 July 2017. Archived from the original on 14 December 2021. Retrieved 7 May 2018.

- ^ "Will your job still exist in 10 years when the robots arrive?". South China Morning Post. 2017. Archived from the original on 7 May 2018. Retrieved 7 May 2018.

- ^ Mokyr, Joel (2019-11-01). "The Technology Trap: Capital Labor, and Power in the Age of Automation. By Carl Benedikt Frey. Princeton: Princeton University Press, 2019. Pp. 480. $29.95, hardcover". The Journal of Economic History. 79 (4): 1183–1189. doi:10.1017/s0022050719000639. ISSN 0022-0507. S2CID 211324400. Archived from the original on 2023-02-02. Retrieved 2020-11-25.

- ^ Lien, Tracey; Borowiec, Steven (2016). "AlphaGo beats human Go champ in milestone for artificial intelligence". Los Angeles Times. Archived from the original on 13 May 2018. Retrieved 7 May 2018.

- ^ Brown, Noam; Sandholm, Tuomas (26 January 2018). "Superhuman AI for heads-up no-limit poker: Libratus beats top professionals". Science. 359 (6374): 418–424. Bibcode:2018Sci...359..418B. doi:10.1126/science.aao1733. PMID 29249696. S2CID 5003977.

- ^ Ontanon, Santiago; Synnaeve, Gabriel; Uriarte, Alberto; Richoux, Florian; Churchill, David; Preuss, Mike (December 2013). "A Survey of Real-Time Strategy Game AI Research and Competition in StarCraft". IEEE Transactions on Computational Intelligence and AI in Games. 5 (4): 293–311. CiteSeerX 10.1.1.406.2524. doi:10.1109/TCIAIG.2013.2286295. S2CID 5014732.

- ^ "Facebook Quietly Enters StarCraft War for AI Bots, and Loses". WIRED. 2017. Archived from the original on 2 February 2023. Retrieved 7 May 2018.

- ^ Schaeffer, J.; Burch, N.; Bjornsson, Y.; Kishimoto, A.; Muller, M.; Lake, R.; Lu, P.; Sutphen, S. (2007). "Checkers is solved". Science. 317 (5844): 1518–1522. Bibcode:2007Sci...317.1518S. CiteSeerX 10.1.1.95.5393. doi:10.1126/science.1144079. PMID 17641166. S2CID 10274228.

- ^ "God's Number is 20". Archived from the original on 2013-07-21. Retrieved 2011-08-07.

- ^ Bowling, M.; Burch, N.; Johanson, M.; Tammelin, O. (2015). "Heads-up limit hold'em poker is solved". Science. 347 (6218): 145–9. Bibcode:2015Sci...347..145B. CiteSeerX 10.1.1.697.72. doi:10.1126/science.1259433. PMID 25574016. S2CID 3796371.

- ^ "In Major AI Breakthrough, Google System Secretly Beats Top Player at the Ancient Game of Go". WIRED. Archived from the original on 2 February 2017. Retrieved 28 December 2017.

- ^ Sheppard, B. (2002). "World-championship-caliber Scrabble". Artificial Intelligence. 134 (1–2): 241–275. doi:10.1016/S0004-3702(01)00166-7.

- ^ Tesauro, Gerald (March 1995). "Temporal difference learning and TD-Gammon". Communications of the ACM. 38 (3): 58–68. doi:10.1145/203330.203343. S2CID 8763243. Archived from the original on 2013-01-11. Retrieved 2008-03-26.

- ^ Tesauro, Gerald (January 2002). "Programming backgammon using self-teaching neural nets". Artificial Intelligence. 134 (1–2): 181–199. doi:10.1016/S0004-3702(01)00110-2.

...at least two other neural net programs also appear to be capable of superhuman play

- ^ "Kramnik vs Deep Fritz: Computer wins match by 4:2". Chess News. 2006-12-05. Archived from the original on 2018-11-25. Retrieved 2018-07-15.

- ^ "The Week in Chess 771". theweekinchess.com. Archived from the original on 2018-11-15. Retrieved 2018-07-15.

- ^ Nickel, Arno (May 2017). "Zor Winner in an Exciting Photo Finish". www.infinitychess.com. Innovative Solutions. Archived from the original on 2018-08-17. Retrieved 2018-07-17.

... on third place the best centaur ...

- ^ Markoff, John (2011-02-16). "Computer Wins on 'Jeopardy!': Trivial, It's Not". The New York Times. ISSN 0362-4331. Retrieved 2023-02-22.

- ^ Jackson, Joab. "IBM Watson Vanquishes Human Jeopardy Foes". PC World. IDG News. Archived from the original on 2011-02-20. Retrieved 2011-02-17.

- ^ "The Arimaa Challenge". arimaa.com. Archived from the original on 2010-03-22. Retrieved 2018-07-15.

- ^ Roeder, Oliver (10 July 2017). "The Bots Beat Us. Now What?". FiveThirtyEight. Archived from the original on 28 December 2017. Retrieved 28 December 2017.

- ^ "AlphaGo beats Ke Jie again to wrap up three-part match". The Verge. Archived from the original on 2018-07-15. Retrieved 2018-07-15.

- ^ Blair, Alan; Saffidine, Abdallah (30 August 2019). "AI surpasses humans at six-player poker". Science. 365 (6456): 864–865. Bibcode:2019Sci...365..864B. doi:10.1126/science.aay7774. PMID 31467208. S2CID 201672421. Archived from the original on 18 July 2022. Retrieved 30 June 2022.

- ^ "Sony's new AI driver achieves 'reliably superhuman' race times in Gran Turismo". The Verge. Archived from the original on 2022-07-20. Retrieved 2022-07-19.

- ^ Proverb: The probabilistic cruciverbalist. By Greg A. Keim, Noam Shazeer, Michael L. Littman, Sushant Agarwal, Catherine M. Cheves, Joseph Fitzgerald, Jason Grosland, Fan Jiang, Shannon Pollard, and Karl Weinmeister. 1999. In Proceedings of the Sixteenth National Conference on Artificial Intelligence, 710-717. Menlo Park, Calif.: AAAI Press.

- ^ Wernick, Adam (24 Sep 2014). "'Dr. Fill' vies for crossword solving supremacy, but still comes up short". Public Radio International. Archived from the original on 2017-12-28. Retrieved Dec 27, 2017.

The first year, Dr. Fill came in 141st out of about 600 competitors. It did a little better the second-year; last year it was 65th

- ^ "Arago's AI can now beat some human players at complex civ strategy games". TechCrunch. 6 December 2016. Archived from the original on 5 June 2022. Retrieved 20 July 2022.

- ^ "AI bots trained for 180 years a day to beat humans at Dota 2". The Verge. 25 June 2018. Archived from the original on 25 June 2018. Retrieved 17 July 2018.

- ^ Bethe, P. M. (2009). The state of automated bridge play.

- ^ "AlphaStar: Mastering the Real-Time Strategy Game StarCraft II". 24 January 2019. Archived from the original on 2022-07-22. Retrieved 2022-07-19.

- ^ "Suphx: The World Best Mahjong AI". Microsoft. Archived from the original on 2022-07-19. Retrieved 2022-07-19.

- ^ "Deepmind AI Researchers Introduce 'DeepNash', An Autonomous Agent Trained With Model-Free Multiagent Reinforcement Learning That Learns To Play The Game Of Stratego At Expert Level". MarkTechPost. 9 July 2022. Archived from the original on 9 July 2022. Retrieved 19 July 2022.

- ^ Bakhtin, Anton; Wu, David; Lerer, Adam; Gray, Jonathan; Jacob, Athul; Farina, Gabriele; Miller, Alexander; Brown, Noam (11 October 2022). "Mastering the Game of No-Press Diplomacy via Human-Regularized Reinforcement Learning and Planning". arXiv:2210.05492 [cs.GT].

- ^ Hu, Hengyuan; Wu, David; Lerer, Adam; Foerster, Jakob; Brown, Noam (11 October 2022). "Human-AI Coordination via Human-Regularized Search and Learning". arXiv:2210.05125 [cs.AI].

- ^ "Microsoft researchers say their newest deep learning system beats humans -- and Google - VentureBeat - Big Data - by Jordan Novet". VentureBeat. 2015-02-10. Archived from the original on 2017-08-09. Retrieved 2017-09-08.

- ^ Santoro, Adam; Bartunov, Sergey; Botvinick, Matthew; Wierstra, Daan; Lillicrap, Timothy (19 May 2016). "One-shot Learning with Memory-Augmented Neural Networks". p. 5, Table 1. arXiv:1605.06065 [cs.LG].

4.2. Omniglot Classification: "The network exhibited high classification accuracy on just the second presentation of a sample from a class within an episode (82.8%), reaching up to 94.9% accuracy by the fifth instance and 98.1% accuracy by the tenth.

- ^ "Man Versus Machine: Who Wins When It Comes to Facial Recognition?". Neuroscience News. 2018-12-03. Archived from the original on 2022-07-20. Retrieved 2022-07-20.

- ^ Yan, Ming; Xu, Haiyang; Li, Chenliang; Tian, Junfeng; Bi, Bin; Wang, Wei; Chen, Weihua; Xu, Xianzhe; Wang, Fan; Cao, Zheng; Zhang, Zhicheng; Zhang, Qiyu; Zhang, Ji; Huang, Songfang; Huang, Fei; Si, Luo; Jin, Rong (17 November 2021). "Achieving Human Parity on Visual Question Answering". arXiv:2111.08896 [cs.CL].

- ^ a b c Zhang, D., Mishra, S., Brynjolfsson, E., Etchemendy, J., Ganguli, D., Grosz, B., ... & Perrault, R. (2021). The AI index 2021 annual report. AI Index (Stanford University). arXiv preprint arXiv:2103.06312.

- ^ Metz, Cade (4 September 2019). "A Breakthrough for A.I. Technology: Passing an 8th-Grade Science Test". The New York Times. Archived from the original on 5 January 2023. Retrieved 5 January 2023.

- ^ a b c van der Maas, Han L.J.; Snoek, Lukas; Stevenson, Claire E. (July 2021). "How much intelligence is there in artificial intelligence? A 2020 update". Intelligence. 87: 101548. doi:10.1016/j.intell.2021.101548. S2CID 236236331.

- ^ McMillan, Robert (2015). "Google's AI Is Now Smart Enough to Play Atari Like the Pros". Wired. Archived from the original on 5 January 2023. Retrieved 5 January 2023.

- ^ "Robots with legs are getting ready to walk among us". The Verge. Archived from the original on 28 December 2017. Retrieved 28 December 2017.

- ^ Hurst, Nathan. "Why Funny, Falling, Soccer-Playing Robots Matter". Smithsonian. Archived from the original on 28 December 2017. Retrieved 28 December 2017.

- ^ "The Business of Artificial Intelligence". Harvard Business Review. 18 July 2017. Archived from the original on 29 December 2017. Retrieved 28 December 2017.

- ^ Brynjolfsson, E., & Mitchell, T. (2017). What can machine learning do? Workforce implications. Science, 358(6370), 1530-1534.

- ^ Nie, W., Yu, Z., Mao, L., Patel, A. B., Zhu, Y., & Anandkumar, A. (2020). Bongard-logo: A new benchmark for human-level concept learning and reasoning. Advances in Neural Information Processing Systems, 33, 16468-16480.

- ^ Stephenson, Matthew; Renz, Jochen; Ge, Xiaoyu (March 2020). "The computational complexity of Angry Birds". Artificial Intelligence. 280: 103232. arXiv:1812.07793. doi:10.1016/j.artint.2019.103232. S2CID 56475869.

Despite many different attempts over the past five years the problem is still largely unsolved, with AI approaches far from human-level performance.

- ^ Turing, Alan (October 1950), "Computing Machinery and Intelligence", Mind, LIX (236): 433–460, doi:10.1093/mind/LIX.236.433, ISSN 0026-4423

- ^ Schoenick, Carissa; Clark, Peter; Tafjord, Oyvind; Turney, Peter; Etzioni, Oren (23 August 2017). "Moving beyond the Turing Test with the Allen AI Science Challenge". Communications of the ACM. 60 (9): 60–64. arXiv:1604.04315. doi:10.1145/3122814. S2CID 6383047.

- ^ Feigenbaum, Edward A. (2003). "Some challenges and grand challenges for computational intelligence". Journal of the ACM. 50 (1): 32–40. doi:10.1145/602382.602400. S2CID 15379263.

- ^ Gray, Jim (2003). "What Next? A Dozen Information-Technology Research Goals". Journal of the ACM. 50 (1): 41–57. arXiv:cs/9911005. Bibcode:1999cs.......11005G. doi:10.1145/602382.602401. S2CID 10336312.

- ^ Hernandez-Orallo, Jose (2000). "Beyond the Turing Test". Journal of Logic, Language and Information. 9 (4): 447–466. doi:10.1023/A:1008367325700. S2CID 14481982.

- ^ Kuang-Cheng, Andy Wang (2023). "International licensing under an endogenous tariff in vertically-related markets". Journal of Economics. Retrieved 2023-04-23.

- ^ Dowe, D. L.; Hajek, A. R. (1997). "A computational extension to the Turing Test". Proceedings of the 4th Conference of the Australasian Cognitive Science Society. Archived from the original on 28 June 2011.

- ^ Hernandez-Orallo, J.; Dowe, D. L. (2010). "Measuring Universal Intelligence: Towards an Anytime Intelligence Test". Artificial Intelligence. 174 (18): 1508–1539. CiteSeerX 10.1.1.295.9079. doi:10.1016/j.artint.2010.09.006.

- ^ Hernández-Orallo, José; Dowe, David L.; Hernández-Lloreda, M.Victoria (March 2014). "Universal psychometrics: Measuring cognitive abilities in the machine kingdom". Cognitive Systems Research. 27: 50–74. doi:10.1016/j.cogsys.2013.06.001. hdl:10251/50244. S2CID 26440282.

- ^ a b Varanasi, Lakshmi (March 2023). "AI models like ChatGPT and GPT-4 are acing everything from the bar exam to AP Biology. Here's a list of difficult exams both AI versions have passed". Business Insider. Retrieved 22 June 2023.

- ^ Rudy, Melissa (24 May 2023). "Latest version of ChatGPT passes radiology board-style exam, highlights AI's 'growing potential,' study finds". Fox News. Retrieved 22 June 2023.

- ^ Bhayana, Rajesh; Bleakney, Robert R.; Krishna, Satheesh (1 June 2023). "GPT-4 in Radiology: Improvements in Advanced Reasoning". Radiology. 307 (5): e230987. doi:10.1148/radiol.230987. PMID 37191491. S2CID 258716171.

- ^ "ILSVRC2017". image-net.org. Archived from the original on 2018-11-02. Retrieved 2018-11-06.

- ^ a b Gray, Richard (2018). "How long will it take for your job to be automated?". BBC. Archived from the original on 11 January 2018. Retrieved 31 January 2018.

- ^ a b "AI will be able to beat us at everything by 2060, say experts". New Scientist. 2018. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

- ^ a b Grace, K., Salvatier, J., Dafoe, A., Zhang, B., & Evans, O. (2017). When will AI exceed human performance? Evidence from AI experts. arXiv preprint arXiv:1705.08807.

- ^ McClain, Dylan Loeb (11 September 2010). "Bent Larsen, Chess Grandmaster, Dies at 75". The New York Times. Archived from the original on 25 March 2014. Retrieved 31 January 2018.

- ^ "The Business of Artificial Intelligence". Harvard Business Review. 18 July 2017. Archived from the original on 18 January 2018. Retrieved 31 January 2018.

- ^ "4 Crazy Predictions About the Future of Art". Inc.com. 2017. Archived from the original on 12 September 2017. Retrieved 31 January 2018.

- ^ Koch, Christof (2016). "How the Computer Beat the Go Master". Scientific American. Archived from the original on 6 September 2017. Retrieved 31 January 2018.

- ^ "'I'm in shock!' How an AI beat the world's best human at Go". New Scientist. 2016. Archived from the original on 13 May 2016. Retrieved 31 January 2018.

- ^ Moyer, Christopher (2016). "How Google's AlphaGo Beat a Go World Champion". The Atlantic. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

- ^ Johnson, George (29 July 1997). "To Test a Powerful Computer, Play an Ancient Game". The New York Times. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

- ^ Johnson, George (4 April 2016). "To Beat Go Champion, Google's Program Needed a Human Army". The New York Times. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

- ^ "Cracking GO". IEEE Spectrum: Technology, Engineering, and Science News. 2007. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

- ^ a b "The Mystery of Go, the Ancient Game That Computers Still Can't Win". WIRED. 2014. Archived from the original on 31 January 2016. Retrieved 31 January 2018.

- ^ Gibney, Elizabeth (28 January 2016). "Google AI algorithm masters ancient game of Go". Nature. 529 (7587): 445–446. Bibcode:2016Natur.529..445G. doi:10.1038/529445a. PMID 26819021. S2CID 4460235.

- ^ a b Bostrom, Nick (2013). Superintelligence. Oxford: Oxford University Press. ISBN 978-0199678112.

- ^ Khatchadourian, Raffi (16 November 2015). "The Doomsday Invention". The New Yorker. Archived from the original on 29 April 2019. Retrieved 31 January 2018.

- ^ Müller, V. C., & Bostrom, N. (2016). Future progress in artificial intelligence: A survey of expert opinion. In Fundamental issues of artificial intelligence (pp. 555-572). Springer, Cham.

- ^ Muehlhauser, L., & Salamon, A. (2012). Intelligence explosion: Evidence and import. In Singularity Hypotheses (pp. 15-42). Springer, Berlin, Heidelberg.

- ^ Tierney, John (25 August 2008). "Vernor Vinge's View of the Future - Is Technology That Outthinks Us a Partner or a Master ?". The New York Times. Archived from the original on 24 December 2017. Retrieved 31 January 2018.

- ^ "Superhumanism". WIRED. 1995. Archived from the original on 2 September 2017. Retrieved 31 January 2018.

- ^ "Tech Luminaries Address Singularity". IEEE Spectrum: Technology, Engineering, and Science News. 2008. Archived from the original on 30 April 2019. Retrieved 31 January 2018.

- ^ Molloy, Mark (17 March 2017). "Expert predicts date when 'sexier and funnier' humans will merge with AI machines". The Telegraph. Archived from the original on 31 January 2018. Retrieved 31 January 2018.

Notes

- ^ IEEE Spectrum attributes to Moore both "Never" and "I don't believe this kind of thing is likely to happen, at least for a long time"